A self-hosted, on-premises AI workspace for our policy consultancy: local LLMs, local document ingestion, and an effectively airgapped setup, so confidential client work never leaves the building.

The problem

For a policy-writing business, document security is non-negotiable - we simply cannot feed sensitive client material into public cloud models. Off-the-shelf AI tools either send everything to a US-hosted API, or come with enterprise pricing and lock-in. We wanted the productivity of modern AI without any of the data-sovereignty trade-offs.

Our approach

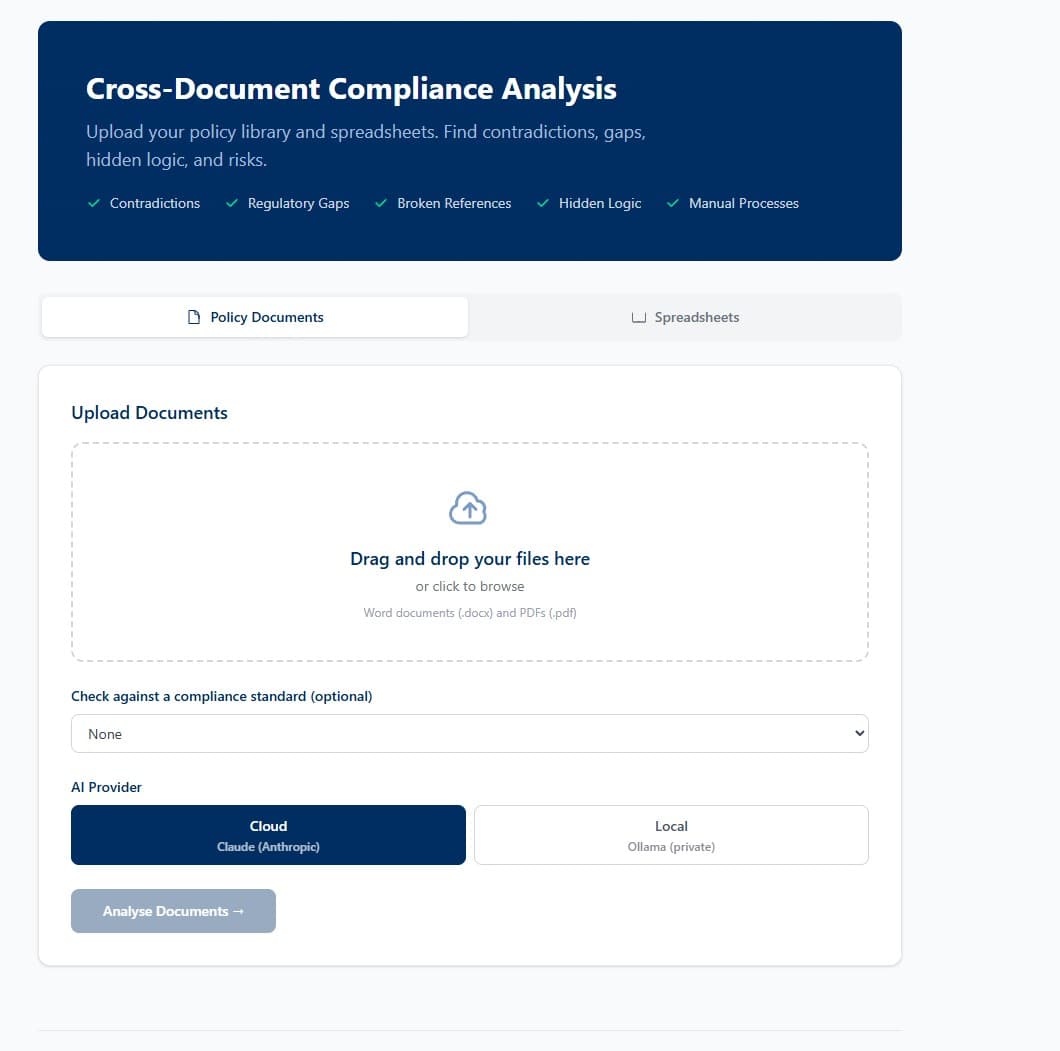

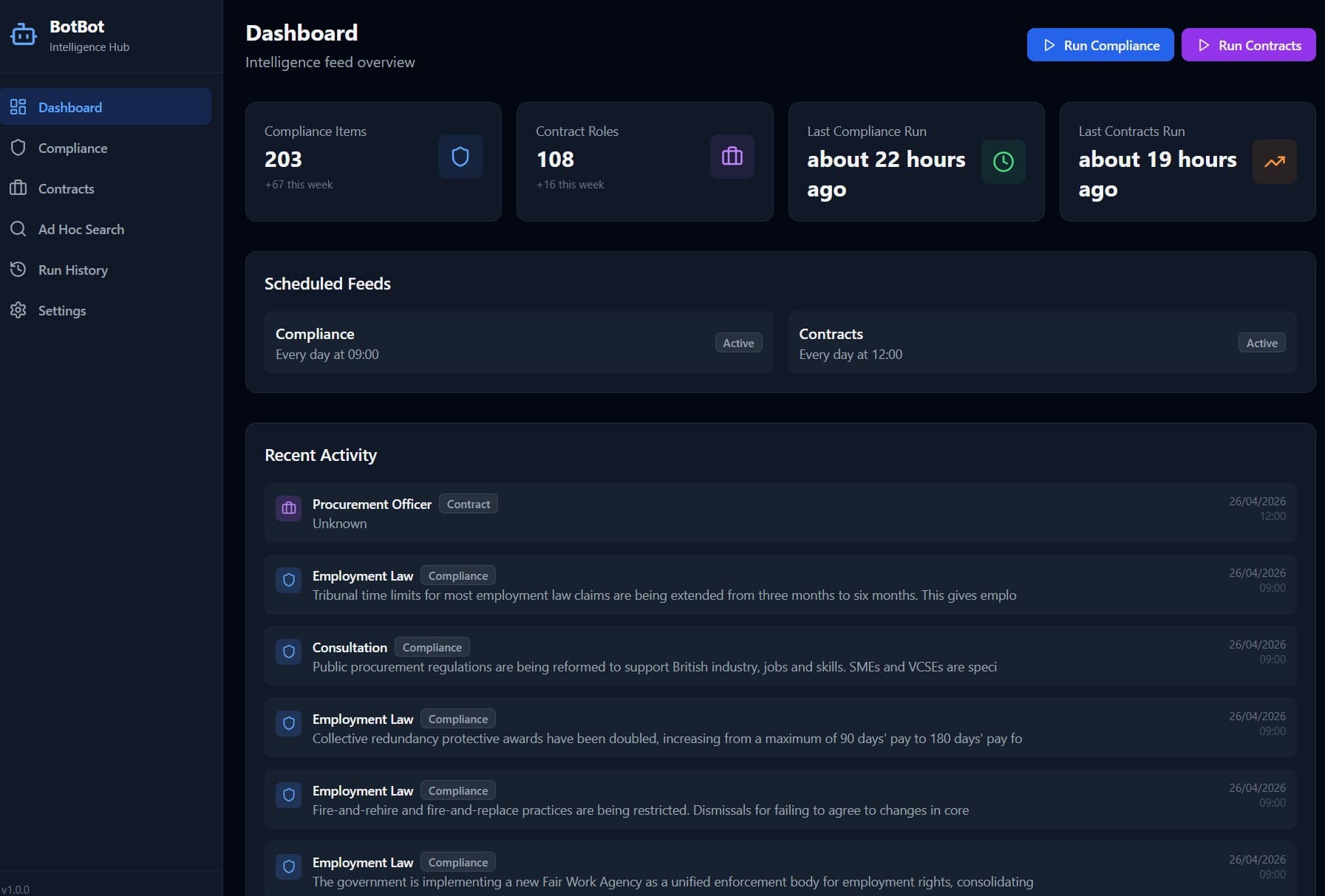

We built a fully self-hosted AI interface that runs on local hardware. Models are served by Ollama and can be swapped at will. A Python ingestion pipeline handles documents, images (OCR) and audio (transcription), embedding everything into a local vector database for high-speed semantic retrieval. A custom Node.js front-end ties it together with a clean tabbed interface (Chat, Compliance, Knowledge, Compare, Transcribe, Agent), exposed remotely via a Cloudflare tunnel with role-based access. Aside from a single optional web-query agent that fetches the latest compliance information, all model inference, document processing and storage happen on the premises.

What it does

Local LLM inference

Ollama runs models on our own hardware. Swap between models per task - small and fast, or large and capable.
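A minimal sketch of per-task model selection, assuming Ollama's standard REST chat endpoint on its default port (`http://localhost:11434/api/chat`). The task names and model tags in `MODEL_FOR_TASK` are hypothetical examples, not the models this deployment actually runs.

```python
import json
import urllib.request

# Assumption: Ollama listening on its default local port.
OLLAMA_URL = "http://localhost:11434/api/chat"

# Hypothetical task-to-model mapping; the tags are examples only.
MODEL_FOR_TASK = {
    "quick": "llama3.2:3b",      # small and fast
    "analysis": "llama3.1:70b",  # large and capable
}
DEFAULT_TASK = "quick"


def build_chat_request(task: str, prompt: str) -> dict:
    """Choose a model for the task (falling back to the default) and build a chat payload."""
    model = MODEL_FOR_TASK.get(task, MODEL_FOR_TASK[DEFAULT_TASK])
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # request one JSON object instead of a token stream
    }


def chat(task: str, prompt: str, timeout: float = 120.0) -> str:
    """POST the payload to the local Ollama server and return the assistant's reply."""
    data = json.dumps(build_chat_request(task, prompt)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["message"]["content"]
```

Keeping the mapping in one dictionary makes swapping a model a one-line change; `"stream": False` trades token-by-token output for a simpler single response.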

Multi-modal ingestion

A Python RAG (retrieval-augmented generation) pipeline ingests documents, images (with OCR) and audio (with transcription) into a unified, searchable corpus.
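The routing-and-chunking step could look roughly like the sketch below. It assumes the extractor is chosen by file extension and that extracted text is split into overlapping character windows before embedding; the extension sets, the `ingest` helper and the chunk sizes are illustrative. The OCR and transcription engines are left as pluggable callables, since the pipeline's actual tools are not named here.

```python
from pathlib import Path
from typing import Callable, Dict, List

# Hypothetical extension groups; the pipeline's real format list is not documented.
IMAGE_EXTS = {".png", ".jpg", ".jpeg", ".tif", ".tiff"}
AUDIO_EXTS = {".mp3", ".wav", ".m4a"}

Extractor = Callable[[Path], str]


def route(path: Path) -> str:
    """Pick an extractor name from the file extension (case-insensitive)."""
    ext = path.suffix.lower()
    if ext in IMAGE_EXTS:
        return "ocr"
    if ext in AUDIO_EXTS:
        return "transcribe"
    return "text"


def chunk(text: str, size: int = 500, overlap: int = 100) -> List[str]:
    """Split text into character windows of `size`, each overlapping the last by `overlap`."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    if not text:
        return []
    step = size - overlap
    # Stop once the remaining tail is already covered by the previous window's overlap.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def ingest(path: Path, extractors: Dict[str, Extractor]) -> List[str]:
    """Extract text with the routed extractor, then chunk it ready for embedding."""
    text = extractors[route(path)](path)
    return chunk(text)
```

For example, `ingest(Path("minutes.wav"), {"text": read_text, "ocr": run_ocr, "transcribe": transcribe_audio})` would hand the file to whichever transcription function is supplied; those three function names are placeholders.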

Vector search at speed

Local vector database for semantic search and retrieval - find the right passage in a 5,000-page document set in milliseconds.
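To illustrate what semantic retrieval does, here is a brute-force cosine-similarity search over an in-memory `{doc_id: vector}` index. This is an illustration only: the vectors are assumed to come from some embedding model, and a production vector database typically uses an approximate nearest-neighbour index (such as HNSW) rather than scoring every vector, which is what makes millisecond lookups over a large corpus practical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(
    query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 3
) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and return the k best (id, score) pairs."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```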

Autonomous compliance agent

The Agent tab can check the date, search the compliance corpus and reason across internal policies versus current regulation autonomously, citing its sources.
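One common way to structure such an agent is a bounded tool loop: a planner (in practice, a call to the local LLM) picks the next tool, the tool's output is recorded as an observation, and the loop ends when the planner returns a final answer. The sketch below assumes that design; `plan_step`, the tool names and the keyword search are hypothetical stand-ins, not the actual agent.

```python
import datetime
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]
Step = Tuple[str, str, str]  # (tool name, argument, observation)


def get_date(_: str = "") -> str:
    """Tool: today's date, so the agent can judge how current a rule is."""
    return datetime.date.today().isoformat()


def make_search_tool(corpus: Dict[str, str]) -> Tool:
    """Tool factory: naive case-insensitive keyword search over {source: text}."""
    def search(query: str) -> str:
        hits = [src for src, text in corpus.items() if query.lower() in text.lower()]
        return ", ".join(hits) if hits else "no matches"
    return search


# A planner takes the question and history and returns (action, argument).
# In practice this would be an LLM call; here it is a hypothetical interface.
Planner = Callable[[str, List[Step]], Tuple[str, str]]


def run_agent(plan_step: Planner, tools: Dict[str, Tool], question: str,
              max_steps: int = 5) -> Tuple[str, List[Step]]:
    """Bounded tool loop: act, observe, repeat until the planner says 'finish'."""
    history: List[Step] = []
    for _ in range(max_steps):
        action, arg = plan_step(question, history)
        if action == "finish":
            return arg, history
        if action in tools:
            observation = tools[action](arg)
        else:
            observation = f"unknown tool: {action}"
        history.append((action, arg, observation))
    return "stopped: step limit reached", history
```

Recording each `(tool, argument, observation)` triple gives the final answer something concrete to cite, and `max_steps` stops a confused model from looping indefinitely.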

Remote access without exposure

Cloudflare tunnel for secure remote access. Role-based access control for admin and standard users.
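A deny-by-default role check might look like this. The two roles come from the description above; the permission names, which actions are admin-only, and the idea that the role arrives with an already-authenticated request are all assumptions for illustration.

```python
from enum import Enum
from typing import Dict, FrozenSet


class Role(Enum):
    ADMIN = "admin"
    STANDARD = "standard"


TABS: FrozenSet[str] = frozenset(
    {"Chat", "Compliance", "Knowledge", "Compare", "Transcribe", "Agent"}
)

# Hypothetical policy: both roles see every tab; only admins can change the
# knowledge base or manage accounts. The real split is not documented.
PERMISSIONS: Dict[Role, FrozenSet[str]] = {
    Role.STANDARD: frozenset(f"tab:{t}" for t in TABS),
    Role.ADMIN: frozenset(f"tab:{t}" for t in TABS) | {"ingest", "manage_users"},
}


def is_allowed(role_name: str, permission: str) -> bool:
    """Deny by default: unknown roles or permissions are rejected."""
    try:
        role = Role(role_name)
    except ValueError:
        return False
    return permission in PERMISSIONS[role]
```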

Versioned, automated backups

Automated jobs take granular, versioned backups so the knowledge base is always recoverable.
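A minimal sketch of versioned snapshots with retention, assuming the knowledge base lives in one directory that can be copied consistently (for example, while the service is paused); the directory layout and the `keep` policy are hypothetical. Each run copies the data into a UTC-timestamped folder and deletes all but the newest `keep` versions.

```python
import shutil
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def snapshot(source: Path, backup_root: Path, keep: int = 7,
             now: Optional[datetime] = None) -> Path:
    """Copy `source` into a UTC-timestamped folder, then prune to the newest `keep` copies."""
    if keep < 1:
        raise ValueError("keep must be at least 1")
    now = now or datetime.now(timezone.utc)
    # Fixed-width stamps sort lexicographically in chronological order.
    stamp = now.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")
    target = backup_root / stamp
    shutil.copytree(source, target)  # creates backup_root if needed; fails if target exists
    versions = sorted(p for p in backup_root.iterdir() if p.is_dir())
    for old in versions[:-keep]:
        shutil.rmtree(old)
    return target
```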

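Tech stack

Ollama (model serving), Python (ingestion, OCR and transcription pipeline), Node.js (front-end), a local vector database and a Cloudflare tunnel (remote access).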

Outcomes

- →Zero client data sent to public cloud LLMs

- →Effectively airgapped - only an optional web-query agent reaches outside

- →Replaces multiple paid AI tools with a single self-owned platform

- →Demonstrates that data-sovereign AI is realistic for SMEs in 2026

Who this is for

Law firms, policy consultancies, healthcare providers, advisory businesses and anyone for whom "don't send our documents to OpenAI" is a hard requirement.